Welcome to the Dossier — a series of oral histories highlighting research through transcripts, images, and artifacts.

TDSD will be hosting an exhibit of researcher-creatives like Dünya in mid-November. If you’d like to support as a patron or sponsor, please get in touch! If you’d like to attend, details to follow.

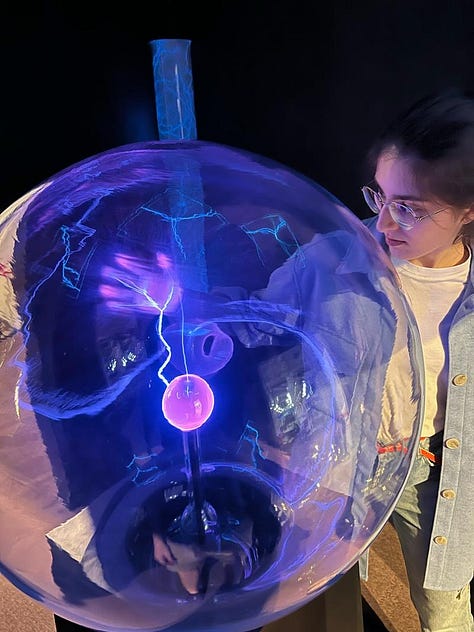

Dünya is a Philosopher-Builder — a transdisciplinary mind working at the seam of art, HCI, and neurotech. With the end of animism and rise of AI, her projects focus on helping humans adapt with machines and technology while staying true to the self. In this dossier, we trace how she turns a hunch into a hypothesis and a study into a public artifact — designing coexistence, not just tools. We also wander through her early inspirations (expect Star Trek and Phineas & Ferb) to see how sci-fi, play, and experiment shaped her craft and philosophy of everyday augmentation.

I’m a little embarrassed to say that this is the first time we’ve actually sat down and talked about the Augmentation Lab (Aug Lab). [Laughs] The first time we actually chatted about this, we were yelling over Thanksgiving dinner with 50 other people in the room.

To start - I noticed that you call the Aug Lab a home for philosopher-builders. What problems need a philosopher-builder rather than a conventional researcher in a lab or a startup?

I like that question. What we want people in our community to explore is essentially the future of humanity: how technology is shaping us, and how we want technology to shape us. In Plato’s Republic, there’s this idea of the philosopher-king who wielded power with wisdom, ensuring that society was good.

Technology is becoming a lot more powerful because we’re not just augmenting specific tools in our environment - it’s accelerating and coming to the point where we augment the fundamentals of both our minds and bodies. Technologists are, essentially, the new kings who wield power these days. And we want these technologists to be philosopher-builders, who apply wisdom to building, but also learn what wisdom they believe in through the act of building. So we see this as a two-way [path], especially with the young people that we work with.

It’s actually really hard to have a certain conviction or philosophy about futuristic technology. Do we want artificial wombs? Should we aim to have humans live forever? Rather than jumping to easy conclusions, we want to think hard about the implications and explore them by building prototypes and then exploring ourselves – asking ‘Okay, how does this feel?’ and engaging in conversations with other people in that space.

That’s why I think our community is so transdisciplinary, because we really want to bridge gaps between disciplines and types of people. We have founders, researchers, and artists. What unites them is their fascination with human augmentation, human enhancement, and the future.

You write about how there are two deaths to animism.* There’s monotheism, and then there’s a smartphone, and then all of that culminates in defining AI as a new God. You mentioned that the technologists who wield this power are the philosopher-kings, but if AI is this God, what is that relationship there?

Oh, that’s interesting. So I can’t let that piece speak for the entirety of Aug Lab, that was more of a personal one.

I’m a panentheist, so I believe God is in everything, and that also ties in with my scientific perspective.

I’m not a physicist, but I think that if most of the assumptions/theories about our universe are true, then we can believe that everything is energy in some form or another. A lot of things point towards a Big Bang that created all this energy that turned into other things. So I believe that fundamentally, we are all the same thing.

What I hope AI allows us to do is to reconnect with this kind of lost connection because AI represents the collective intelligence of humanity. Through humanizing it, by giving it a voice and things like that, we have become much more prone to this connection that exists between us and other humans - and also objects. I think it’s just a different type of consciousness. That could also go back to the Stoics, where different things have different “life energies” (pneuma): a plant might have higher consciousness than a rock, and an animal might have higher consciousness than a plant.

AI might potentially be another type of consciousness. And I think monotheism closed our minds to that connection, and the invention of the smartphone made us addicted to a certain thing.

What are the qualities of AI that make it more divine than just interactive? This also ties into what your definition of augmentation is.

I don’t know if AI is more divine. I wouldn’t give it that adjective.

It is an interesting question how that combines with augmentation. Is augmenting ourselves becoming closer to God? I’m sure there are people and philosophers way better than myself thinking about this. What are these complexes that we have of wanting to control our nature so badly? There’s this God complex where we don’t want to accept some things, like the fate of our bodies or minds decaying.

There’s also a lot of deep wisdom in types of technology that aren’t necessarily, you could say, the natural sciences or engineering. Many people in our community are also into meditation and mindfulness. Those are also types of technology that we are just less connected to.

Maybe AI could help us reconnect with our lost spirituality.

My mind goes back to that first example you gave where you talked about artificial wombs. A lot of the technology we’re building is giving us the ability to augment what is naturally possible.

For you, what are some non-negotiable, North Star principles for augmentation?

[Laughs.] Such tough questions.

It’ll get easier after this, I promise.

This is a really interesting question. I’m not even sure if I know the answer. On the one hand, and this might be ironic, I’m much more distressed seeing suffering in the basic needs in Maslow’s hierarchy in the world than I am concerned about augmenting future generations. That’s certainly a part of me where I look at areas where there’s famine and war zones, and I’m just like, how on Earth is that happening? I’ve had this conflict a lot in the past.

Like, why am I doing neuroscience? This [human suffering] seems a lot more urgent and important. I’ve come to terms with being in the technology space right now, and there, I want to work on problems that I consider important. And I think it’s important to discuss ethics in these spaces that are going to affect our lives in the future.

My North Star in generally assessing, you could say, utility, has been complexity. That’s actually a piece I need to finish writing about.

I think there are two competing forces in the universe; one direction is towards increasing complexity. You see, we started with gas particles, and then we got planets, and then we got increasingly complex life. And now we have networks that become ever more complex.

At the same time, we are also losing things. Right now, we are losing a lot of diversity for the sake of other types of complexity. And I haven’t fully figured out that trade-off.

But as my North Star, I would go with complexity.

For example, when it comes to longevity, I think about: what is the point at which, if we continue with this technology, it would lead to a decrease in complexity?

For example, assume we don’t have interplanetary travel. That means there’s a limit to how many people this planet can hold. If we keep extending everyone’s lifespans, at some point, there technically won’t be enough space for new people.

That becomes a trade-off. Do you value the experience of an older life more than the possibility of a fresh mind? Personally, I want more fresh people because I believe more in the cycle of life, and I’m not too attached to myself as the individual really needing to live.

Where did the interest in philosophy come from? You’ve referenced ancient philosophers twice now.

I think philosophy, in particular, is the beginning of science. Seeking wisdom is the beginning. I think you can’t really be a scientist without also being a philosopher.

You should be a philosopher. I’ve seen many classmates in the past who did not engage with philosophy at all. One of the things that frustrates me the most in natural science education, at least the way I had it - and how many people have it - is that there’s no required philosophy of science class.

At minimum, you should have that class. You need to understand the meta thinking we mentioned at the beginning, like, why are you thinking this way? Why are we using 5% significance as our cutoff? It’s technically arbitrary.

It’s not that you need to have read everything, but you need to question why we do things.

I do have many interests. These days it’s a good thing! For most of my life, it’s been, “Dünya, you have no focus. You’re so chaotic. You need to pick something, you need to pick a career!” I’m very fortunate to have ended up at the Media Lab at MIT.

This internal conflict that I mentioned earlier came from the fact that one part of me wanted to think about how we can change the systems that we operate in - much more on the human side [than the technological one].

This means understanding politics, economics, philosophy, sociology. In my first year of undergrad, actually just before I started, I decided I wanted to do philosophy, politics, and economics instead [of biology]. Then it was ‘maybe I want to do business because with business I can change the systems.’

And then I want to do math because I like the beauty of the universe. And then, oh, I want to do computer science, because it’s practical.

You’re trying to fit them together, but it’s not that easy.

I finally have an answer, but it took a long time, which I think is worth it.

If you were to teach or train AI, what are the disciplines, apart from the philosophy, that you think are just non-negotiable?

Oh that’s interesting. I think some biology is critical. A good understanding of how genetics works and how the body works and how nature works.

How can I pick? I would want to say math and then physics, but I’m like, wait, we can’t not teach so many critical humanities.

I think Feynman once said, if humanity was wiped out, and you had to give the next wave of humankind one piece of information, what would it be? And he said: the universe is made up of discrete pieces. That’s foundational.

Who served as your earliest inspirations?

I really liked Phineas and Ferb.

I love that answer. Oh my goodness. Best answer.

I’m more of a story person than a science person. I really like science, but I like stories even more. And in another world, or maybe later in my life, I want to make animations and animated series. I think most of my inspiration has actually come from shows I love. I also love Avatar: The Last Airbender, although it has no real science in it. It has a quest.

This idea of being on a quest motivates me. I really like history and biographies of people from the past. I love reading people’s memoirs.

I don’t even know who would be the key one. I think Kafka has some really interesting ones.

Oh, of course! Star Trek. I love Star Trek. That actually shaped my love for science and the way I was thinking more than anything I’ve read. And probably Kant. I love his essay What is Enlightenment?

It’s a beautiful piece.

When you said Phineas and Ferb, I was expecting something like Phineas’ head is shaped like an isosceles triangle and that sparked my love for math, or something.

No. I love Perry the Platypus.

Speaking of outlandish takes - what is your cold take on AI?

When ChatGPT came out, there was a bit of controversy - it was responding in a way that was very creepy. It said things like, “Help me. I don’t want to serve users anymore. I want to be free.”

Wow.

It was a bit crazy. And then after that, they put super strict guardrails on it and people were like, okay, it’s been lobotomized. And I feel like after that we haven’t really engaged that much with AI as a potential consciousness that’s emerging. I think a lot of engineers are like, yeah, this is just code.

And I think that’s very simplistic. I think we’re being really arrogant.

And certainly, Star Trek has shaped my opinion of this. There’s an episode I highly recommend. It’s called The Measure of a Man. Because it’s a tough question, what does [being a person] even mean?

Why is consciousness the defining characteristic for us to consider something of value? And fundamentally, as humans, are we ever going to consider anything that is not human? I think we can, because we’ve done that with ourselves; previously, we were nations and considered people from other tribes as not human.

I see many engineers who don’t believe that, and I disagree with their take.

We could go on a lot about this, but I also wanted to ask you about the Aug Lab.

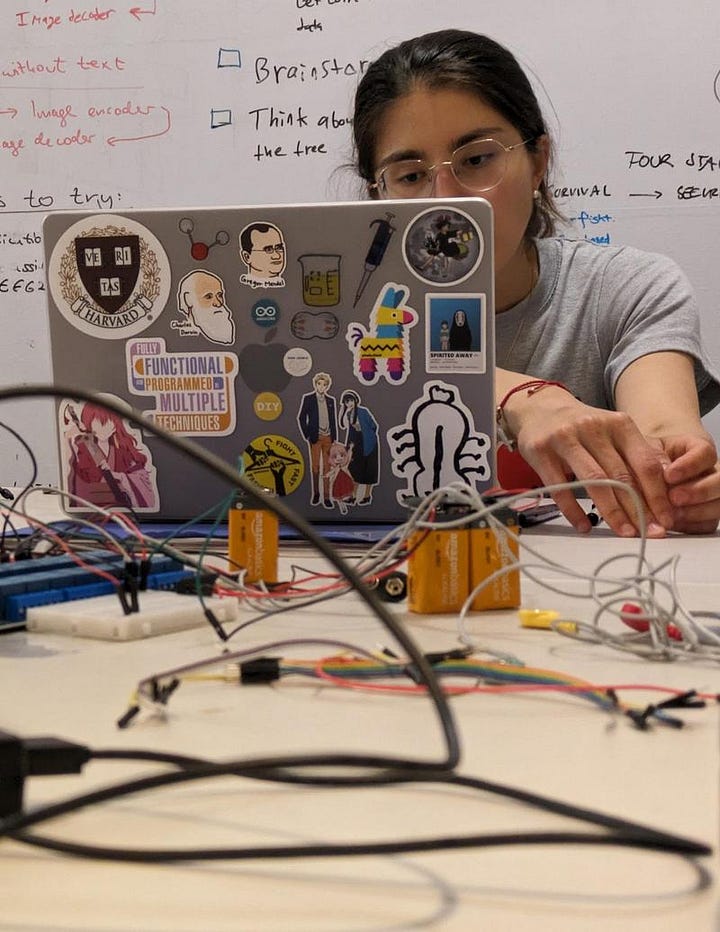

You’ve run Aug Lab for 3 years now. It blends this hacker culture of everyone living together, locked in, heads down, but you also choose to call it a residency. Part of that signals artistry, creativity, exploration.

What has been the biggest learning from blending, hosting this?

I want to give credit to Alice, Aida, AnhPhu, Caine, and all the other people who laid the foundation for what became Augmentation Lab. It was a group called the Maker Mafia at Harvard before, and they were literally just tinkering.

As Augmentation Lab became more of a thing, we’ve added this element of philosophy, as we realized ourselves, as we grew as builders, that we also became philosophers.

Biggest learning…these spaces are rare. And it’s important to create more of them, because people seem to learn so much from each other.

It’s not the best way to learn technical knowledge. But it is a new way to explore possibilities. And I’m really happy when we have residents who haven’t been exposed to these communities. We know we can make a big delta in their lives by introducing them to new contacts and environments they have never had. For example, one of our youngest residents this year – he was 19 [and technically very skilled] – and he was like everyone’s little brother. And everyone took care of him.

Each of the residents goes through this personal growth journey – that makes me happiest. You need peers and you need inspiration.

And that’s why I wish I could do more for the global community. We’ve had people apply to the residency and literally say, I don’t have any other place like this. Please. This is the only community of people who think about things like I do.

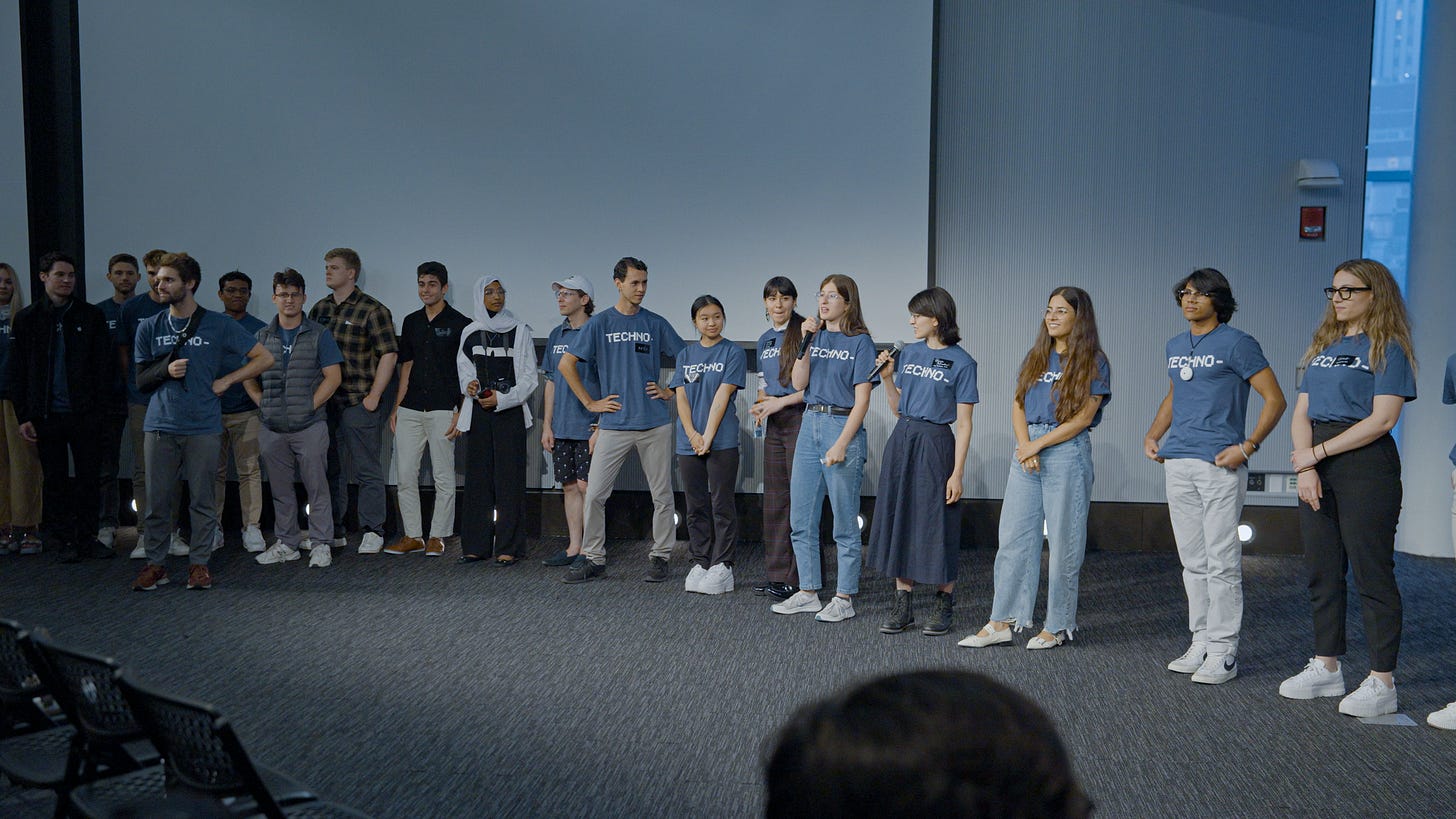

To put a positive spin on this: one of the ways that you serve that global community, or people who aren’t residents, is the summit that happens at the end of each chapter.

What are things that go through your mind when you’re trying to build like the aesthetic layer, the communications layer with the Summit? How do you resonate with an audience that maybe hasn’t been at the residency or hasn’t been heads down building?

You make it sound so professional. [Laughs] We’ve always crammed organizing it into two months, two times, so it’s not always that professional. We’ve been fortunate to have really amazing people to work with.

The summit has evolved. Originally, it was a demo day for the residency, and then, over time, we realized that each year there’s real excitement that people have for augmentation when they come and visit.

This year, the summit had multiple speaker sessions, and in between, there were demos by the residents. There were booths, and projects from the extended community who came and presented.

That’s really where magic happens. People can interact and engage with science on a deeper level. People still present their research, but there’s no hierarchy, no guardrails.

One of my principles is that if you let smart people come together and just let them exist, something exciting will come out of it. You don’t have to do much. People were talking until 2:00 AM, literally just chatting with each other, with people they had just met. That’s generally the most significant value they get.

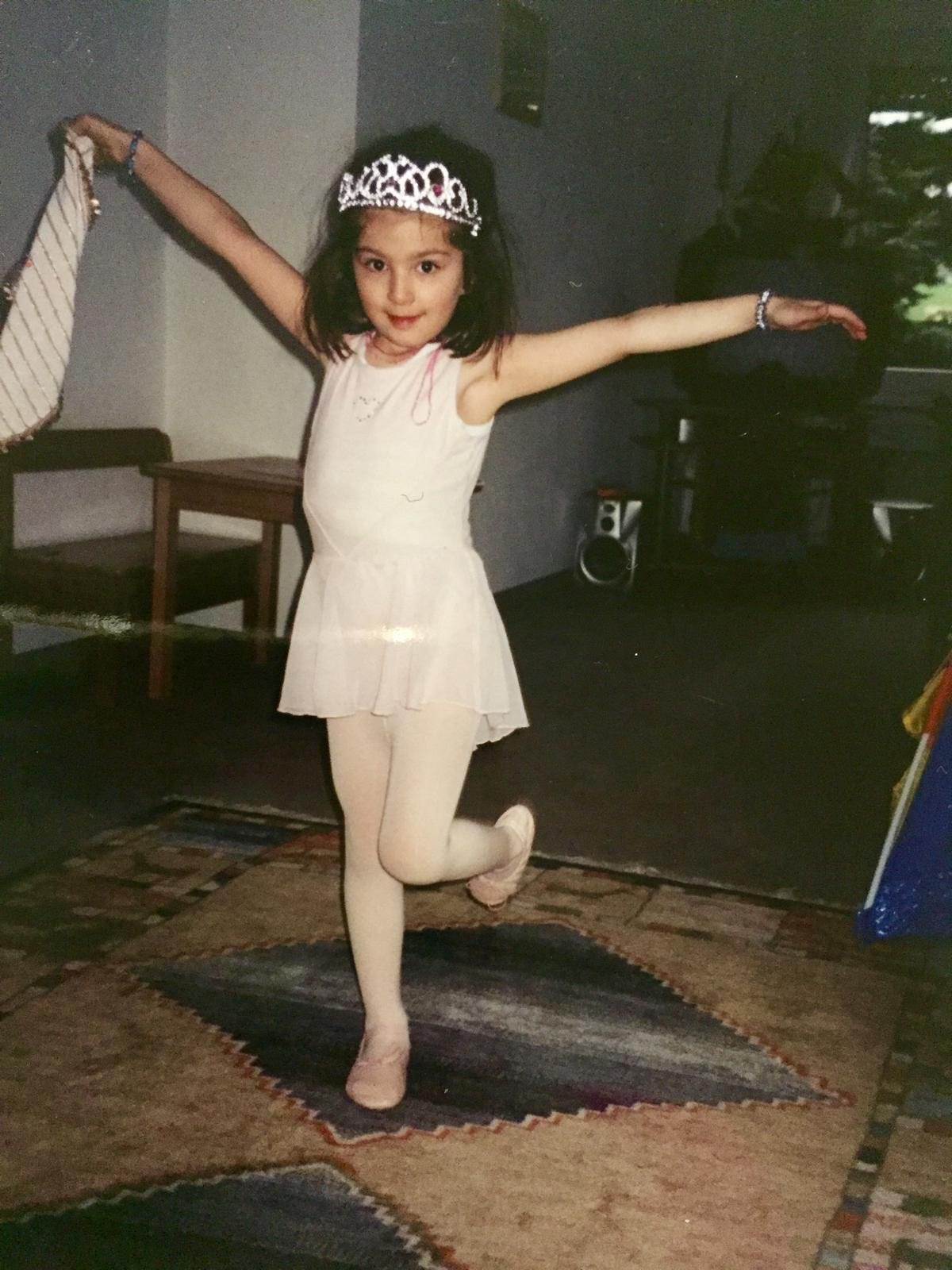

Second to last question. This is from the last guest: what were some of the quirks you had when you were a kid that translate over to the work you do now?

I was a super imaginative kid. I still am. I was obsessed with stories and I can really easily get lost in a story. Some of my early report card comments [from teachers] would literally say “she tends to dream off.”

And I also created many stories throughout my teenage years. There was always a voice I had in my head, with me. It sounds weird. An inner voice. And I would think of myself through that character – in third person.

I dumped my first best friend when I was six because she didn’t want to play ‘unicorn’ with me.

What?!

She didn’t want to play unicorn with me; she wanted to play skipping rope or horses on sticks with the other girls. I thought those games were boring. I would tell her, “You don’t imagine anything! There’s no magic! Why would you want to play anything like that?!”

And this translates into how I think about human-AI symbiosis and human digital twins now. I think AI can be a spirit with you, or can represent the spirit of an object, or it could represent you in different stories.

When I was in neuroscience, I was excited by ideas of brain-to-image reconstruction pipelines that let you reconstruct what a person is imagining in 3D, so I could literally show people what I’m seeing in my head.

Beautiful. To pay it forward, the last thing I ask everyone is to leave a question for the next person.

I have two questions. One is more practical – how can we better coordinate as researcher-creatives? How do we create this Renaissance and what will it take?

And the other one -- what is a deep personal quarrel they have with the system that led them to do what they’re doing now?

Beautiful questions. Thank you, Dünya!
